Google’s New Search Console URL Inspection API: What It Is & How to Use It

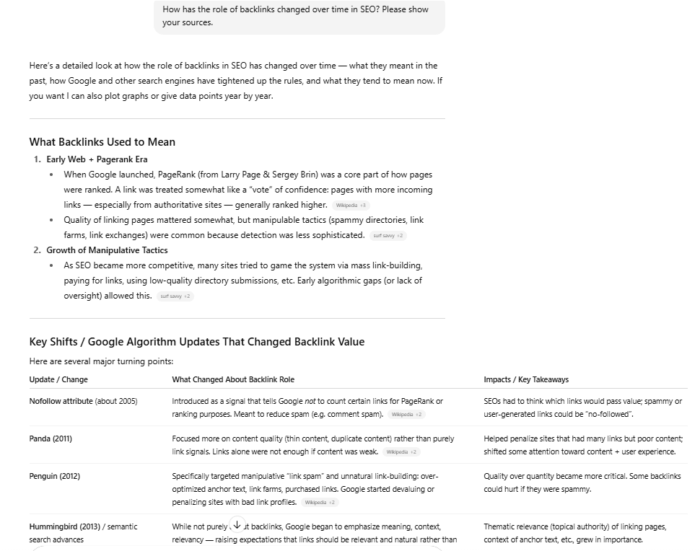

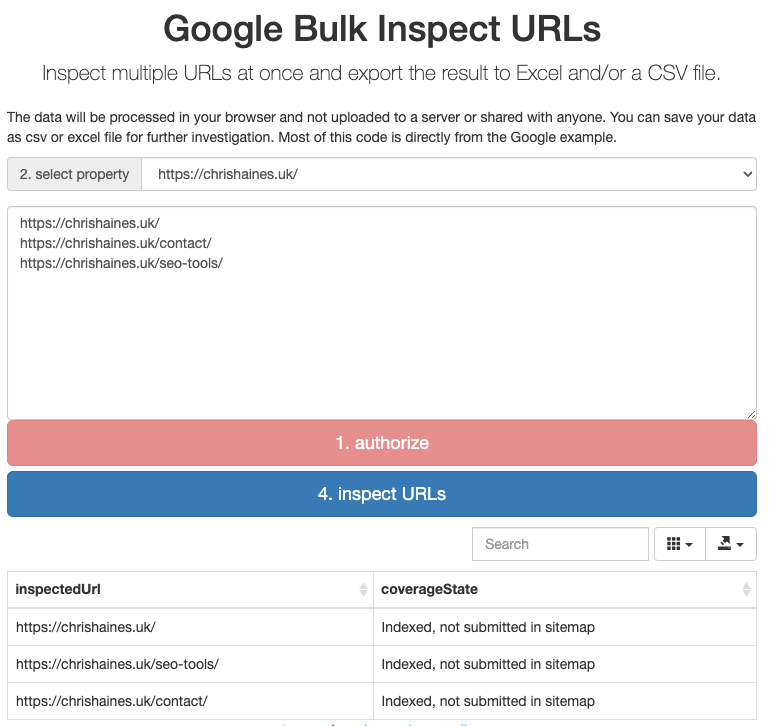

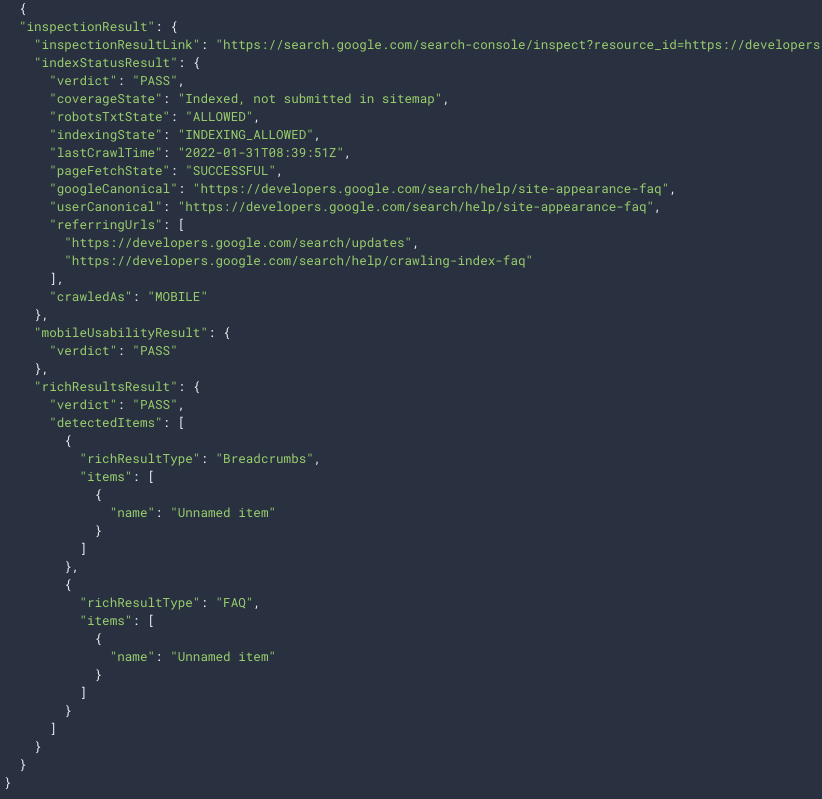

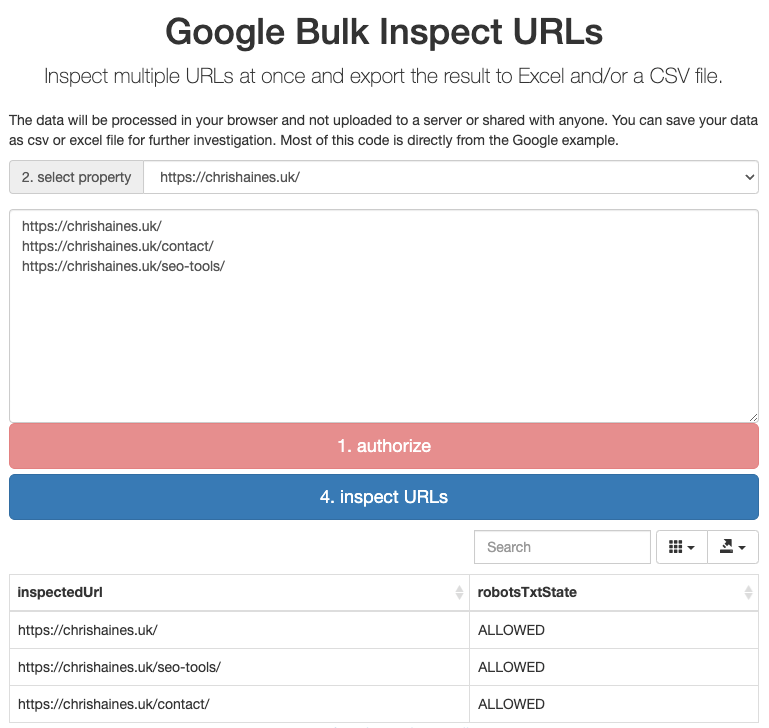

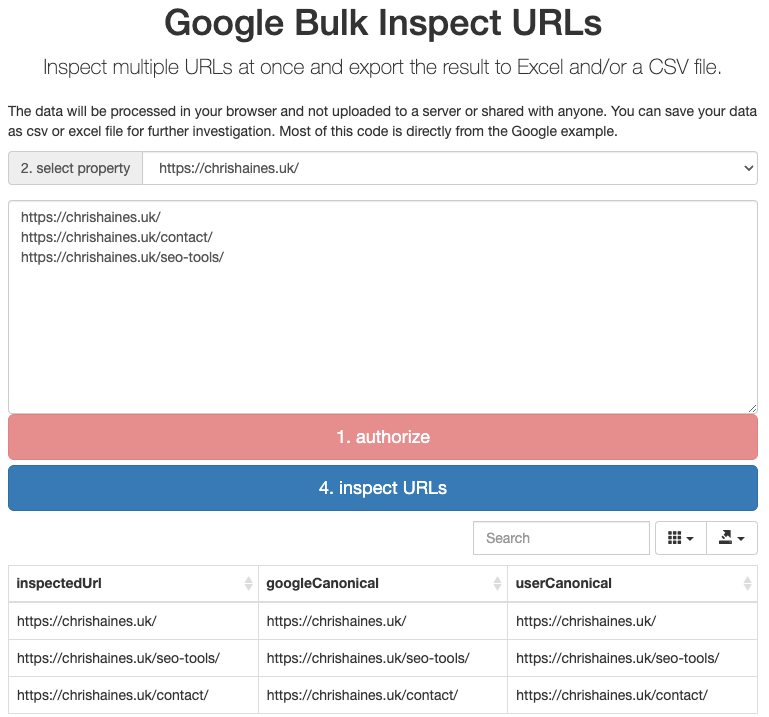

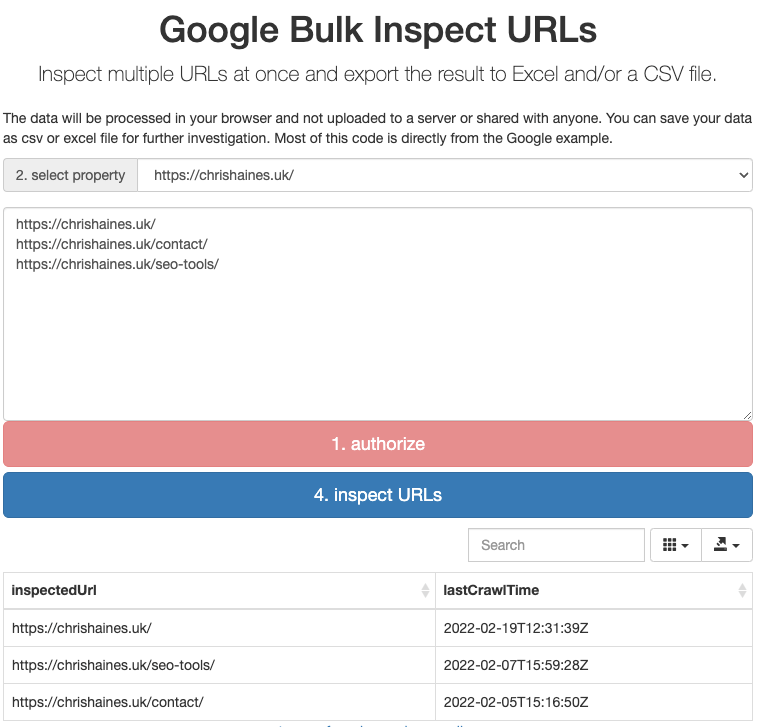

Diagnosing technical issues on your website can be one of the most time-consuming but important aspects of running a website. To make things worse, Google only allows you to inspect one URL at a time to diagnose potential issues on your website (this is done within Google Search Console). Luckily, there is now a faster way to test your website: enter the Google Search Console URL Inspection API… The Google Search Console URL Inspection API is a way to bulk-check the data that Google Search Console has on URLs. Its purpose is to help developers and SEOs more efficiently debug and optimize their pages using Google’s own data. Here’s an example of me using the API to check whether a few URLs are indexed and submitted in my sitemap: The Google Search Console URL Inspection API allows you to pull a wide range of data. Below is a list of some of the most discussed features: With this field, you can understand exactly when Googlebot last crawled your website. This is extremely useful for SEOs and developers to measure the frequency of Google’s crawling of their sites. Previously, you could only get access to this type of data through log file analysis or spot-checking individual URLs with Google Search Console. With this field, you can understand whether you have any robots.txt rules that will block Googlebot. This is something you can check manually, but being able to test it at scale with Google’s own data is a fantastic step forward. In some situations, Google has been known to select a different canonical from the one that has been specified in the code. In this situation, having the ability to compare both (side by side and at scale) using the API is useful for enabling you to make the appropriate changes. This field allows you to understand which user agent is used for a crawl of your site: Mobile/Desktop. 
The response codes are below for reference: Understanding the pageFetchState can help you diagnose server errors, not found 4xxs, soft 404s, redirection errors, pages blocked by robots.txt, and invalid URLs. A list of responses is below for reference. The indexing state tells you the current status of indexation for the URLs. Apart from the more obvious Pass and Fail responses, there are other responses: This gives you detail on whether a URL has been submitted in your sitemap and indexed. This allows you to see where each page is linked from, according to Google. This enables you to understand which URLs are included in the sitemap(s). You can also use the API to inspect your AMP site—if you have one. Using the Google Search Console URL Inspection API involves making a request to Google. The request parameters you need to define are the URL that you want to inspect and also the URL of the property in Google Search Console. The request body contains data with the following structure: If you are curious to learn more about how to use the API, Google has extensive documentation about this. Below is an example of the type of response you can get from the API: If you’re not comfortable with code or just want to give it a go straight away, you can use valentin.app’s free Google Bulk Inspect URLs tool. The tool provides a quick way to query the API without any coding skills! Here’s how to use it. You can: In theory, the Google Search Console URL Inspection API seems like a great way to understand more about your website. However, you can pull so much data that it’s difficult to know where to start. So let’s look at a few examples of use cases. Site migrations can cause all kinds of issues. For example, developers can accidentally block Google from crawling your site or certain pages via robots.txt. Luckily, the Google Search Console URL Inspection API makes auditing for these issues a doddle. 
For example, you can check whether you’re blocking Googlebot from crawling URLs in bulk by calling robotsTxtState. Here is an example of me using the Google Search Console URL Inspection API (via valentin.app) to call robotsTxtState to see the current status of my URLs. As you can see, these pages are not blocked by robots.txt, and there are no issues here. TIP Site migrations can sometimes lead to unforeseen technical SEO issues. After the migration, we recommend using a tool, such as Ahrefs’ Site Audit, to check your website for over 100 pre-defined SEO issues. If you make a change to the canonical tags across your site, you will want to know whether or not Google is respecting them. You may be wondering why Google ignores the canonical that you declared. Google can do this for a number of reasons, for example: Below is an example of me using the Google Search Console URL Inspection API to see whether Google has respected my declared canonicals: As we can see from the above screenshot, there are no issues with these particular pages and Google is respecting the canonicals. TIP To quickly see if the googleCanonical matches the userCanonical, export the data from the Google Bulk Inspect URLs tool to CSV and use an IF formula in Excel. For example, assuming your googleCanonical data is in Column A and your userCanonical is in Column B, you can use the formula =IF(A2=B2, “Self referencing”,”Non Self Referencing”) to check for non-matching canonicals. When you update many pages on your website, you will want to know the impact of your efforts. This can only happen after Google has recrawled your site. With the Google Search Console URL Inspection API, you can see the precise time Google crawled your pages by using lastCrawlTime. If you can’t get access to the log files for your website, then this is a great alternative to understand how Google crawls your site. 
Here’s an example of me checking this: As you can see in the screenshot above, lastCrawlTime shows the date and time my website was crawled. In this example, the most recent crawl by Google is the homepage. Understanding when Google recrawls your website following any changes will allow you to link whether or not the changes you made have any positive or negative impact following Google’s crawl. Although the Google Search Console URL Inspection API is limited to 2,000 queries per day, this query limit is determined by Google Property. This means you can have multiple properties within one website if they are verified separately in Google Search Console, effectively allowing you to bypass the limit of 2,000 queries per day. Google Search Console allows you to have 1,000 properties in your Google Search Console account, so this should be more than enough for most users. Another potential limiting factor is you can only run the Google Search Console URL Inspection API on a property that you own in Google Search Console. If you don’t have access to the property, then you cannot audit it using the Google Search Console URL Inspection API. So this means auditing a site that you don’t have access to can be problematic. Accuracy of the data itself has been an issue for Google over the last few years. This API gives you access to that data. So arguably, the Google Search Console URL Inspection API is only as good as the data within it. As we have previously shown in our study of Google Keyword Planner’s accuracy, data from Google is often not as accurate as people assume it to be. The Google Search Console URL Inspection API is a great way for site owners to get bulk data directly from Google on a larger scale than what was previously possible from Google Search Console. Daniel Waisberg and the team behind the Google Search Console URL Inspection API have definitely done a great job of getting this released into the wild. 
But one of the criticisms of the Google Search Console URL Inspection API from the SEO community is that the query rate limit is too low for larger sites. (It is capped at 2,000 queries per day, per property.) For larger sites, this is not enough. Also, despite the possible workarounds, this number still seems to be on the low side. What’s your experience of using the Google Search Console URL Inspection API? Got more questions? Ping me on Twitter. 🙂What is the Google Search Console URL Inspection API?

What type of data can you get from the Google Search Console URL Inspection API?

lastCrawlTime

robotsTxtState

googleCanonical and userCanonical

crawledAs

pageFetchState

FieldWhat it means PAGE_FETCH_STATE_UNSPECIFIED Unknown fetch state SUCCESSFUL Successful fetch SOFT_404 Soft 404 BLOCKED_ROBOTS_TXT Blocked by robots.txt NOT_FOUND Not found (404) ACCESS_DENIED Blocked due to unauthorized request (401) SERVER_ERROR Server error (5xx) REDIRECT_ERROR Redirection error ACCESS_FORBIDDEN Blocked due to access forbidden (403) BLOCKED_4XX Blocked due to other 4xx issue (not 403, 404) INTERNAL_CRAWL_ERROR Internal error INVALID_URL Invalid URL indexingState

coverageState

referringUrls

Sitemap

Other uses for the API

How to use the Google Search Console URL Inspection API step by step

How can you use the Google Search Console URL Inspection API in practice?

1. Site migration – diagnosing any technical issues

2. Understand if Google has respected your declared canonicals

3. Understand when Google recrawls after you make changes to your site

FAQs

How to get around the Google Search Console URL Inspection API limits?

Can I use the Google Search Console URL Inspection API on any website?

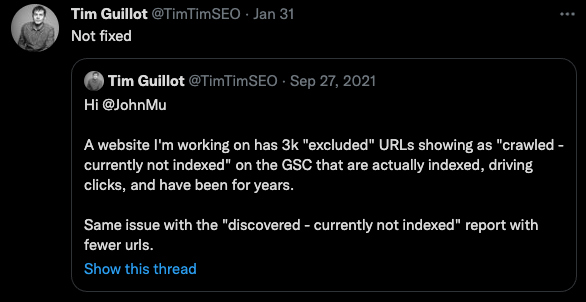

How accurate is the data?

Final thoughts
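
For readers who would rather call the API directly than use a tool, the request described in the step-by-step section can be sketched in Python roughly as follows. This is a minimal illustration, not a full client: it assumes you already have an OAuth 2.0 access token for a verified property (obtaining one is out of scope here), the helper names `build_request_body`, `inspect_url`, and `summarize` are made up for this example, and the sample response at the bottom is hand-written placeholder data rather than real output.

```python
import json
import urllib.request

# Endpoint of the URL Inspection method in the Search Console API.
ENDPOINT = "https://searchconsole.googleapis.com/v1/urlInspection/index:inspect"

# Index status fields discussed in this article.
FIELDS = (
    "coverageState", "robotsTxtState", "indexingState", "lastCrawlTime",
    "pageFetchState", "googleCanonical", "userCanonical", "crawledAs",
)


def build_request_body(inspection_url, site_url):
    """Return the JSON body: the URL to inspect plus the property it belongs to."""
    return {"inspectionUrl": inspection_url, "siteUrl": site_url}


def inspect_url(access_token, inspection_url, site_url):
    """POST one inspection request. Needs a valid OAuth token (assumed to exist)."""
    body = json.dumps(build_request_body(inspection_url, site_url)).encode("utf-8")
    request = urllib.request.Request(
        ENDPOINT,
        data=body,
        headers={
            "Authorization": f"Bearer {access_token}",
            "Content-Type": "application/json",
        },
        method="POST",
    )
    with urllib.request.urlopen(request) as response:
        return json.load(response)


def summarize(response):
    """Flatten the index status part of a response into one row per URL."""
    status = response.get("inspectionResult", {}).get("indexStatusResult", {})
    return {field: status.get(field) for field in FIELDS}


# Hand-written placeholder response, used only to show the shape of the data.
sample_response = {
    "inspectionResult": {
        "indexStatusResult": {
            "coverageState": "Submitted and indexed",
            "robotsTxtState": "ALLOWED",
            "pageFetchState": "SUCCESSFUL",
            "googleCanonical": "https://example.com/",
            "userCanonical": "https://example.com/",
            "crawledAs": "MOBILE",
        }
    }
}

row = summarize(sample_response)
print(row["robotsTxtState"], row["pageFetchState"])
```

Keeping `summarize` separate from the network call means the same flattening step can be reused on responses saved to disk, which helps when you are working within the daily query limit.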

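The canonical check from the Excel tip and the 2,000-queries-per-day limit can also be handled in a few lines of Python. The sketch below assumes each row is a dictionary carrying the `googleCanonical` and `userCanonical` values for one URL; the helper names and the example URLs are hypothetical and only serve to illustrate the idea.

```python
def canonical_mismatches(rows):
    """Return the rows where Google's chosen canonical differs from the declared one."""
    return [
        row for row in rows
        if row.get("googleCanonical") != row.get("userCanonical")
    ]


def daily_batches(urls, daily_limit=2000):
    """Split a URL list into chunks that each fit within one day's quota for a property."""
    return [urls[i:i + daily_limit] for i in range(0, len(urls), daily_limit)]


# Placeholder rows, not real inspection data.
rows = [
    {"url": "https://example.com/a",
     "googleCanonical": "https://example.com/a",
     "userCanonical": "https://example.com/a"},
    {"url": "https://example.com/b",
     "googleCanonical": "https://example.com/a",
     "userCanonical": "https://example.com/b"},
]

mismatched = canonical_mismatches(rows)
batches = daily_batches([f"https://example.com/page-{n}" for n in range(4500)])

print([row["url"] for row in mismatched])
print([len(batch) for batch in batches])
```

A plain string comparison mirrors the Excel formula; if your URLs differ only in trailing slashes or letter case, you may want to normalize them before comparing.
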
MikeTyes