How High-Growth Companies Actually Measure Marketing

Key Takeaways

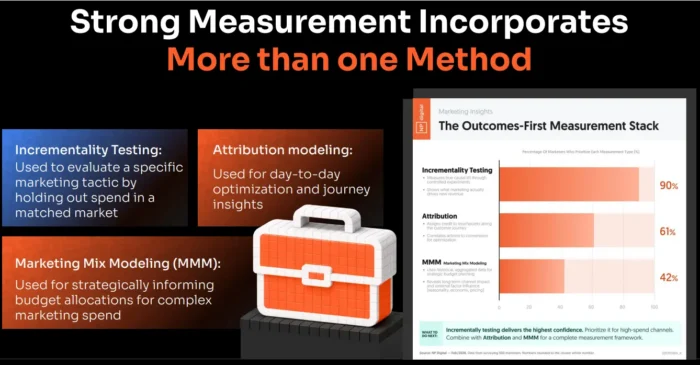

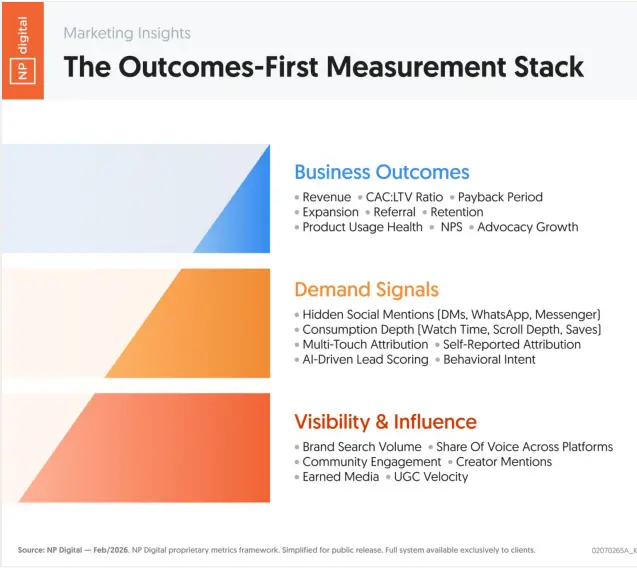

- No single measurement method can answer all the questions modern marketing leaders face. A layered stack combining multiple tools is necessary.
- The challenge of marketing attribution is structural: it assigns credit to touchpoints but cannot prove causality. It works best for tactical optimization, not strategic decisions.
- Marketing mix modeling identifies marginal returns and channel saturation, helping guide long-term budget allocation.
- Incrementality testing is the most reliable way to determine whether marketing activity actually created outcomes, rather than captured demand that already existed.
- Organizing measurement teams into pioneers, settlers, and planners ensures each type of work gets the right standards and decision-making speed.

Most marketing leaders know the challenge of marketing attribution well: you have dashboards full of data, but the numbers don’t reliably answer which investments are actually driving growth. The instinct is to search for a better tool, a smarter model, or a more accurate attribution system. But the organizations getting measurement right have moved past that instinct.

They have stopped looking for a single source of truth. The challenge of marketing attribution is part of a broader problem: modern marketing environments are too complex for one method to cover everything. Discovery happens across too many platforms, buyer journeys are too fragmented, and privacy changes have eroded too much signal for any single tool to give a complete picture.

What works instead is a layered approach. Different measurement methods answer different questions, and high-growth organizations combine them deliberately. Marketing mix modeling guides strategic budget allocation. Incrementality testing validates whether a specific activity caused a result. Platform data handles day-to-day campaign optimization. Each plays a defined role. None of them works as a standalone strategy.

This is the second piece in a three-part series on modern marketing measurement. The first part examined why traditional metrics like traffic, rankings, and ROAS are becoming less reliable. This piece covers how to build a measurement system that actually supports growth decisions.

Why No Single Measurement Method Works Anymore

The digital marketing attribution tools most teams rely on were built for a different environment. They worked well when user journeys were relatively linear, cookies tracked reliably across sessions, and most discovery happened through channels that were easy to log. That environment is gone.

Today, a buyer might encounter a brand through an AI-generated answer, research it on YouTube, discuss it in a private message thread, and convert through a branded search three weeks later. The attribution system credits the last touchpoint. The channels that actually shaped the decision get little or nothing.

This is the core structural problem. Marketing attribution models are designed to assign credit, not establish cause. Even sophisticated multi-touch attribution approaches operate within the same constraint: they can show which touchpoints preceded a conversion, but they cannot prove that removing any of them would have changed the outcome.

What high-growth organizations have recognized is that different measurement tools answer different questions. Attribution modeling answers: which touchpoints were present before a conversion? Marketing mix modeling answers: where are marginal returns strongest across channels over time? Incrementality testing answers: did this specific activity actually change outcomes?

Each question matters. Each requires a different approach. According to NP Digital research, 90 percent of high-growth marketers prioritize incrementality testing, 61 percent use attribution modeling, and 42 percent use marketing mix modeling. The most effective teams use all three, weighted by the decision at hand.

Marketing Mix Modeling as Strategic Guidance

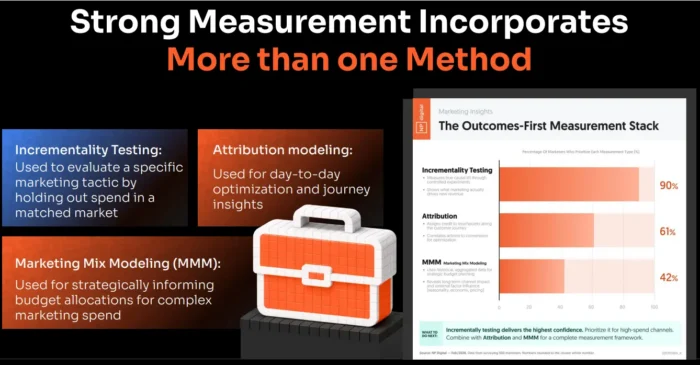

Marketing mix modeling, or MMM, takes a different approach to measurement than attribution. Rather than tracking individual user journeys, it uses aggregated historical data to model the relationship between marketing spend and business outcomes across channels over time. The result is a view of marginal returns that attribution systems cannot provide.

MMM is most useful for identifying where each additional dollar of spend in a channel produces diminishing returns. A channel running at a strong blended ROAS may look efficient in a dashboard while the last 30 percent of its budget is generating negligible incremental revenue. MMM surfaces that inefficiency. It also helps identify cross-channel effects, such as how video or brand investment upstream affects conversion rates in paid search downstream.
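The gap between blended and marginal returns can be illustrated with a minimal sketch. The saturating response curve and its parameters (`ceiling`, `scale`) are hypothetical assumptions chosen for illustration, not the output of a fitted mix model; a real MMM estimates the curve from historical data.

```python
import math


def revenue(spend: float, ceiling: float = 500_000.0, scale: float = 40_000.0) -> float:
    """Hypothetical saturating response curve: revenue flattens as spend grows."""
    return ceiling * (1.0 - math.exp(-spend / scale))


def blended_roas(spend: float) -> float:
    """Total revenue divided by total spend, as a dashboard would report it."""
    return revenue(spend) / spend


def roas_of_last_share(spend: float, share: float = 0.30) -> float:
    """ROAS earned only by the final `share` of the budget."""
    tail_spend = spend * share
    tail_revenue = revenue(spend) - revenue(spend - tail_spend)
    return tail_revenue / tail_spend


budget = 100_000.0  # assumed channel budget for the example
print(f"Blended ROAS: {blended_roas(budget):.2f}")
print(f"ROAS on the last 30% of budget: {roas_of_last_share(budget):.2f}")
```

Under these assumed parameters the blended figure looks healthy while the final 30 percent of spend returns far less per dollar, which is the pattern a dashboard average hides.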

For strategic budget allocation, this makes MMM the most reliable tool available. It does not require user-level tracking, which means privacy changes and cookie deprecation do not erode its accuracy the way they do for attribution. Quarterly MMM runs can consistently improve long-term budget decisions even when day-to-day attribution signals are noisy.

MMM does have real limits. It struggles to quantify upper-funnel brand building accurately, because the lag between a brand impression and a downstream conversion is too long and too indirect for historical correlations to capture cleanly. Organizations using MMM for strategic guidance while supplementing it with brand tracking and perception studies get the most complete picture.

Incrementality Testing as the Causal Engine

If MMM provides strategic direction, incrementality testing provides causal proof. The question it answers is specific: would this outcome have happened if this marketing activity had not occurred? That is a fundamentally different question from what attribution models ask, and the answer is far more useful for deciding where to invest.

The most common incrementality approaches include geo experiments, holdout tests, and campaign pauses. In a geo experiment, matched geographic markets are identified and spend is withheld in one group while maintained in another. The difference in outcomes between the two groups isolates the causal lift from the marketing activity. Holdout tests apply the same logic at the audience level. Campaign pauses, while cruder, can also reveal whether results drop when spend stops.
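A geo experiment readout can be sketched roughly as follows. This is a simplified illustration under stated assumptions: the market groups are already well matched, the holdout group's trend is used to project what the exposed group would have done without spend, and all conversion counts are made up. Production tests would also check statistical significance, which this sketch omits.

```python
from dataclasses import dataclass


@dataclass
class GeoGroup:
    pre_conversions: float   # conversions in the period before the test
    test_conversions: float  # conversions during the test window


def incremental_lift(exposed: GeoGroup, holdout: GeoGroup) -> tuple[float, float]:
    """Return (incremental conversions, relative lift) for the exposed markets."""
    # Trend the holdout markets showed with spend withheld.
    baseline_trend = holdout.test_conversions / holdout.pre_conversions
    # Projected outcome for the exposed markets had spend also been withheld.
    counterfactual = exposed.pre_conversions * baseline_trend
    incremental = exposed.test_conversions - counterfactual
    return incremental, incremental / counterfactual


# Hypothetical numbers for illustration only.
exposed_markets = GeoGroup(pre_conversions=1000, test_conversions=1210)
holdout_markets = GeoGroup(pre_conversions=800, test_conversions=880)

extra, lift = incremental_lift(exposed_markets, holdout_markets)
print(f"Incremental conversions: {extra:.0f}")
print(f"Relative lift: {lift:.1%}")
```

In this made-up data the holdout markets grew 10 percent on their own, so only the growth beyond that trend is credited to the marketing activity.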

For teams running Amazon attribution or other marketplace-based measurement, incrementality testing is especially valuable because platform-reported conversions often reflect demand that already existed rather than demand the campaign created.

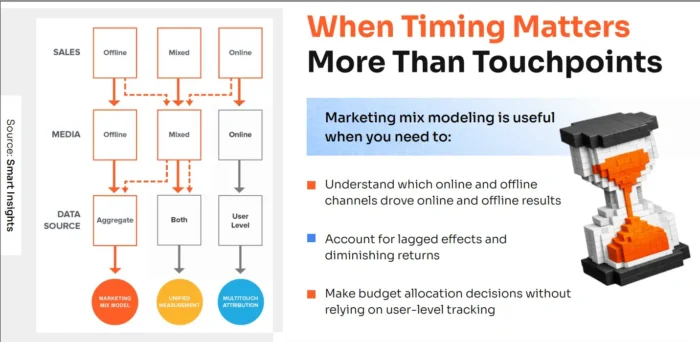

NP Digital research tracking incremental versus attributed conversions across channels found meaningful gaps in almost every case. Organic social showed 13 percent incremental lift against 3 percent attributed lift. Paid social showed 17 percent incremental lift against 24 percent attributed, suggesting attribution was over-crediting that channel. These gaps directly affect where budget should go, and they are invisible without incrementality testing.
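The size and direction of those gaps is simple arithmetic. The sketch below uses only the two channel figures quoted above; the `credit_gap` helper and the verdict labels are illustrative names, not part of the research.

```python
# Lift figures (percent) quoted above for the two channels.
channels = {
    "organic social": {"incremental": 13, "attributed": 3},
    "paid social": {"incremental": 17, "attributed": 24},
}


def credit_gap(incremental: int, attributed: int) -> int:
    """Positive means attribution under-credits the channel; negative means over-credits."""
    return incremental - attributed


for name, lift in channels.items():
    gap = credit_gap(lift["incremental"], lift["attributed"])
    verdict = "under-credited" if gap > 0 else "over-credited" if gap < 0 else "aligned"
    print(f"{name}: {gap:+d} points, {verdict} by attribution")
```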

Incrementality testing requires planning and clean data, but it does not require a large budget. Even a single well-designed geo holdout on a major channel provides more reliable insight into causal impact than months of attribution reporting.

Platform Data Still Matters, But Only for Optimization

Platform dashboards from Google, Meta, and other ad platforms remain useful, but their role is narrower than most teams treat it. The attribution blind spots built into platform reporting are structural, not accidental. Platforms are designed to optimize campaign performance within their own ecosystems. They are not designed to tell you whether that performance changed your business.

For day-to-day decisions, platform data is the right tool. Pacing spend against budget, adjusting bids based on performance signals, identifying creative fatigue, and diagnosing delivery issues all rely on platform metrics. These are operational decisions, and platform data handles them well.

Where platform data becomes unreliable is in strategic decisions. Algorithms optimize toward users most likely to convert, which means they systematically favor demand capture over demand creation. A high ROAS figure in a platform dashboard may reflect an efficient algorithm, not effective marketing.

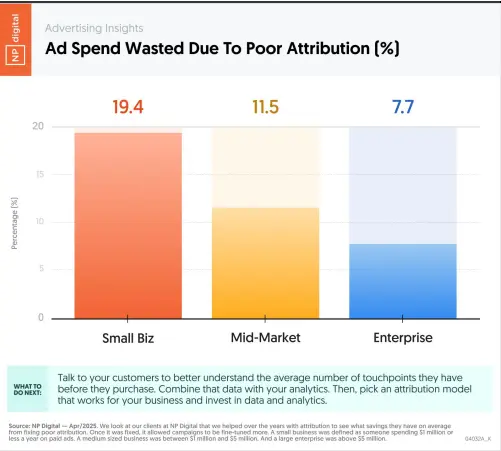

According to NP Digital research, poor attribution costs small businesses an average of 19.4 percent of ad spend, mid-market companies 11.5 percent, and enterprise brands 7.7 percent. That wasted spend is largely invisible in platform reporting because the platforms have no incentive to surface it.

The practical guidance is to use platform metrics for what they are: tactical steering, not strategic truth.

The Pioneer–Settler–Planner Measurement Model

Building a layered measurement system is not just a technical challenge. It is an organizational one. There are three distinct roles that every effective measurement organization needs: pioneers, settlers, and planners.

Pioneers work at the edges of what is currently measurable. They run incrementality experiments, build initial marketing mix models, test geo holdouts, and pressure-test assumptions that may no longer hold. Their work is uncertain by design. Pioneers do not deliver certainty; they deliver direction. Holding them to the same standards of statistical confidence as operational reporting will stop this work before it produces value.

Settlers take what emerges from experimentation and turn it into repeatable processes. They refine models, tighten assumptions, and connect insights back to planning decisions. This is where early MMM runs mature into playbooks, and where incrementality test results become frameworks teams can apply consistently. Settlers build trust by translating directional insight into systems that can actually be run.

Planners keep daily operations running. They rely on platform data, attribution signals, and conversion mechanics to manage spend in real time. This layer is necessary; without it, execution falls apart. But planners should not be asked to explain long-term growth or diagnose structural shifts in performance. Their focus is optimizing efficiency within channel constraints.

The failure mode most organizations fall into is applying planner-level standards of certainty to pioneer-level work. Requiring 95 percent statistical confidence from experiments that need time to develop guarantees that nothing new gets built. A model with 60 percent directional confidence, paired with fast iteration, consistently outperforms a perfect answer that arrives a quarter too late.

How High-Growth Companies Allocate Measurement Resources

NP Digital research tracking measurement practices across Canadian brands found a clear divide between average organizations and high-growth ones. Average teams allocate roughly 65 percent of their measurement influence to platform dashboards and 25 percent to attribution tools, leaving little room for more strategic methods.

High-growth brands with over $750,000 in annual media investment look meaningfully different. Platform dashboard reliance drops to around 45 percent. Attribution tool usage decreases to 15 percent. MMM grows from 5 percent to 20 percent. Incrementality testing reaches 10 percent, and early generative search optimization work accounts for another 10 percent.

These organizations are not abandoning attribution or platform data. They are reweighting them. The logic is straightforward: in markets that keep changing, you build measurement capability where change is happening, not where familiarity feels safe. The goal across all of these methods is directional confidence, meaning enough signal to make better budget decisions faster, not perfect certainty that arrives after the opportunity has closed.
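The reweighting described above can be tabulated in a few lines. This sketch includes only the methods for which a share is stated for both groups; shares not given for average teams are deliberately left out rather than guessed.

```python
# Shares of measurement influence (percent) quoted above.
average = {"platform dashboards": 65, "attribution tools": 25, "MMM": 5}
high_growth = {"platform dashboards": 45, "attribution tools": 15, "MMM": 20}


def reweighting(before: dict[str, int], after: dict[str, int]) -> dict[str, int]:
    """Percentage-point change for every method present in both mixes."""
    return {method: after[method] - before[method] for method in before if method in after}


shifts = reweighting(average, high_growth)
for method, delta in sorted(shifts.items(), key=lambda item: item[1]):
    print(f"{method}: {delta:+d} points")
```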

Seven Steps to Evolve Your Measurement System

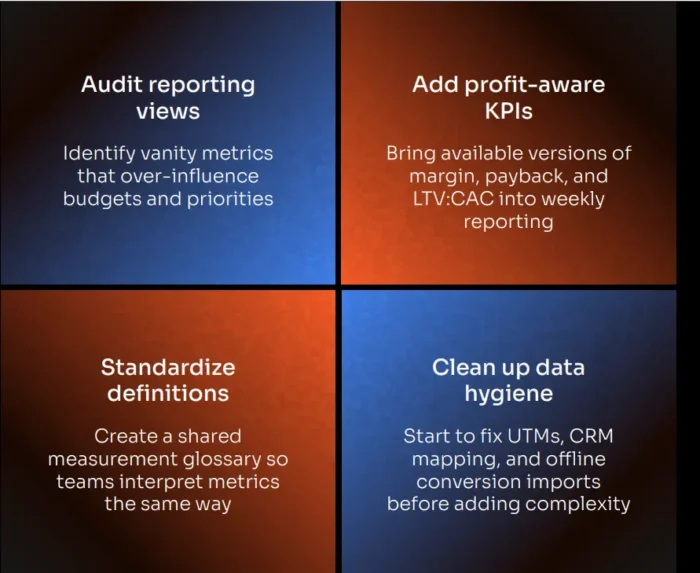

Rebuilding a measurement system does not require replacing everything at once. The organizations that do this well evolve gradually, adding capability in the right order rather than attempting a full overhaul.

1. Map your current measurement inputs. List every tool and data source your team uses and identify where each one sits: operational platform data, attribution modeling, MMM, or incrementality. Most teams discover they are heavily concentrated in the first two.
2. Identify the decision gaps. Be explicit about which strategic questions your current stack cannot answer. The challenge of marketing attribution is most visible here: where are you making budget decisions based on blended ROAS without visibility into marginal returns? Where are you crediting channels that may just be capturing existing demand?
3. Introduce basic modeling. Even a simple quarterly MMM run provides more strategic direction than attribution alone. Start with your highest-spend channels and the business outcomes most directly tied to revenue.
4. Run your first incrementality test. Pick one major channel and design a geo holdout or holdout audience test. The goal is not perfection; it is building the organizational capability and comfort with this type of measurement.
5. Adapt governance expectations. Attribution reports will not disappear from leadership reviews overnight. Running a parallel track that shows incrementality and MMM findings alongside attribution data builds confidence in the new approach without requiring a full transition.
6. Build processes gradually. Settlers turn pioneer experiments into repeatable workflows. Each incrementality test should produce a documented methodology that makes the next one faster and cheaper.
7. Increase decision cadence. One of the advantages of directional confidence over perfect certainty is speed. Weekly budget adjustments based on incrementality signals and MMM outputs outperform quarterly reallocations based on attribution reports.
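The first step, mapping inputs to layers, can start as something as small as the sketch below. The tool names and their layer assignments are hypothetical placeholders; substitute your own inventory.

```python
from collections import Counter

# The four layers named in the mapping step.
LAYERS = ("platform data", "attribution", "MMM", "incrementality")

# Hypothetical inventory: tool names and assignments are placeholders.
inventory = {
    "ad platform dashboards": "platform data",
    "web analytics attribution reports": "attribution",
    "CRM source reporting": "attribution",
    "quarterly mix model": "MMM",
}


def layer_coverage(tools: dict[str, str]) -> dict[str, int]:
    """Count how many tools sit in each measurement layer."""
    counts = Counter(tools.values())
    return {layer: counts.get(layer, 0) for layer in LAYERS}


def uncovered_layers(tools: dict[str, str]) -> list[str]:
    """Layers with no tool at all, which are candidate decision gaps."""
    return [layer for layer, count in layer_coverage(tools).items() if count == 0]


print(layer_coverage(inventory))
print("No coverage yet:", uncovered_layers(inventory))
```

A layer with zero entries is a prompt for the second step: it marks a class of question the current stack cannot answer.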

FAQs

What Is Marketing Attribution?

Marketing attribution is the process of assigning credit to the marketing touchpoints that contributed to a conversion. Common marketing attribution models include last-click, first-click, linear, and data-driven attribution. Each assigns credit differently across the customer journey. Attribution is most useful for optimizing campaign performance within channels, but it cannot establish whether marketing caused a business outcome.

How Do You Measure Marketing Attribution?

Attribution is measured by connecting conversion data to the touchpoints that preceded it, using tracking pixels, UTM parameters, and CRM data to map the path. Marketing attribution software platforms automate this process and offer different attribution models to choose from. The key limitation to understand is that all attribution approaches assign credit based on correlation, not causality.

Which Is the Best Software for Tracking Marketing Attribution?

The best marketing attribution software depends on your business model and measurement goals. Google Analytics 4 and platform-native dashboards handle basic attribution well. Tools like Northbeam, Triple Whale, and Rockerbox are built for direct-response and e-commerce contexts. For strategic decisions, attribution software works best when paired with MMM and incrementality testing rather than used in isolation.

Conclusion

The challenge of marketing attribution is not a problem that better software alone solves. It is a structural limitation of what attribution can do. Credit assignment and causal proof are different things, and conflating them leads to budget decisions that favor demand capture over demand creation.

High-growth organizations have addressed this by building layered measurement systems where each tool plays a defined role: platform data for operational steering, attribution for tactical signals, MMM for strategic allocation, and incrementality testing for causal validation. The next piece in this series examines how marketing leaders use these signals together to decide where the next dollar of investment should go.

If you want to go deeper on where attribution breaks down before moving to that piece, this breakdown of marketing attribution blind spots covers the specific failure modes in detail. For a broader view of how to connect measurement to revenue decisions, this guide to digital marketing attribution is a useful reference.
