What is AI bias? [+ Data]

![What is AI bias? [+ Data]](https://blog.hubspot.com/hubfs/ai%20bias.png#keepProtocol)

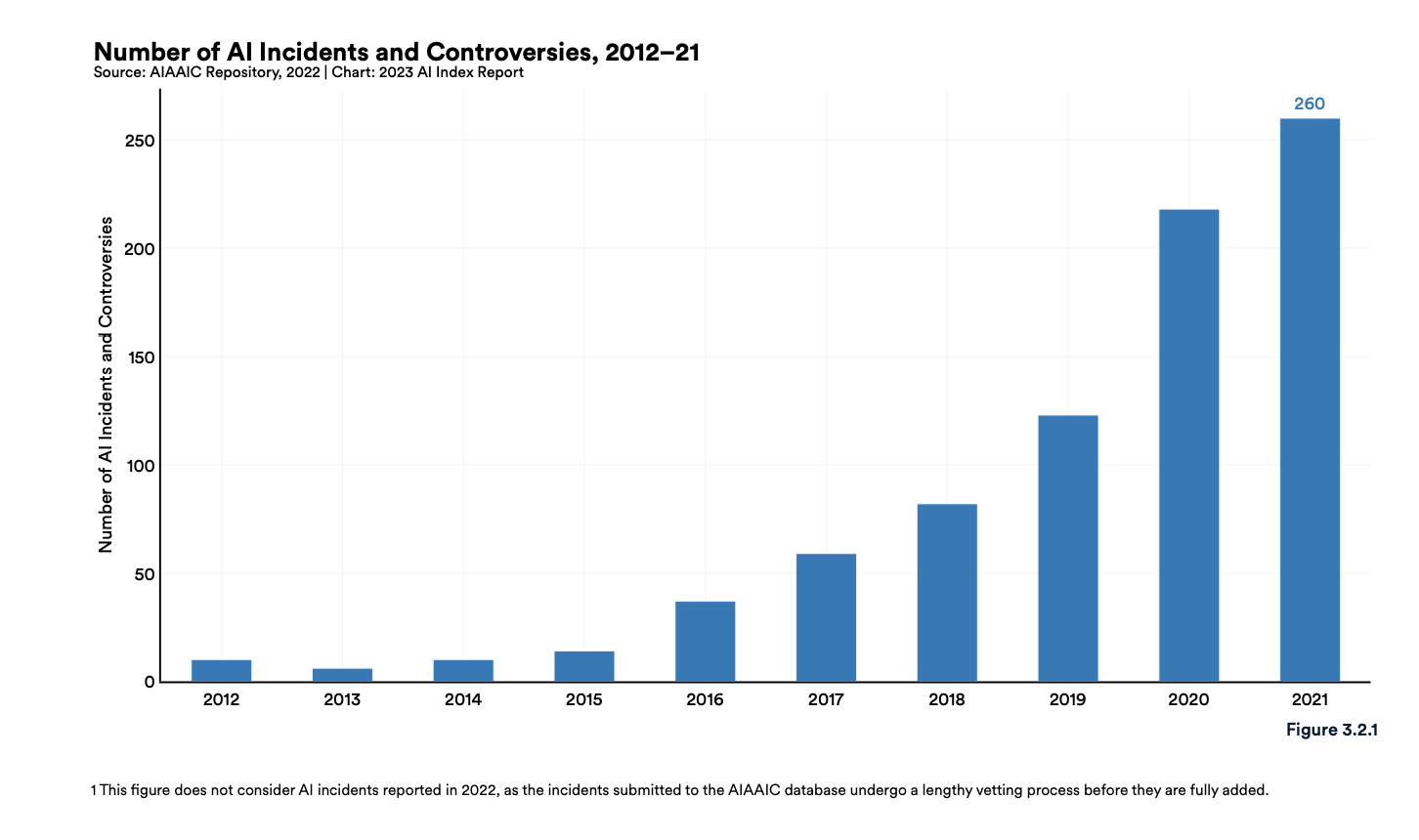

Our State of AI Survey Report found that one of the top challenges marketers face when using generative AI is its tendency to be biased. Marketers, sales professionals, and customer service professionals also report hesitating to use AI tools because they can sometimes produce biased information.

It's clear that business professionals are worried about AI bias, but what makes AI biased in the first place? In this post, we'll discuss the potential for harm in using AI, real-life examples of AI bias, and how society can mitigate that harm.

What is AI bias?

AI bias is the idea that machine learning algorithms can be biased when carrying out their programmed tasks, like analyzing data or producing content. AI is typically biased in ways that uphold harmful beliefs, like race and gender stereotypes.

According to the Artificial Intelligence Index Report 2023, AI is biased when it produces outputs that reinforce and perpetuate stereotypes that harm specific groups. AI is fair when it makes predictions or produces outputs that don't discriminate against or favor any specific group.

In addition to prejudice and stereotypical beliefs, AI can also be biased for other reasons, such as sample size bias, where the data it learns from doesn't represent the full population it's used on.

How does AI bias reflect society's bias?

AI is biased because society is biased. Much of the data AI is trained on contains society's biases and prejudices, so AI learns those biases and produces results that uphold them. For example, an image generator asked to create an image of a CEO might produce images of White men because of the historical bias in employment reflected in the data it learned from.

As AI becomes more commonplace, many fear it has the potential to scale the biases already present in society that harm many different groups of people. The AI, Algorithmic, and Automation Incidents and Controversies Repository (AIAAIC) says that the number of newly reported AI incidents and controversies was 26 times greater in 2021 than in 2012.

Let's go over some examples of AI bias.
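First, a toy illustration of how "biased data in, biased results out" can happen. This minimal Python sketch assumes hypothetical, invented lending records for two anonymous groups (`group_a` and `group_b`) and a deliberately naive "model" that memorizes each group's historical approval rate. The function names, threshold, and numbers are all illustrative assumptions, not a real lending system. The last function shows the kind of arithmetic behind a claim like "X% more likely to deny": a comparison of two groups' denial rates.

```python
from collections import defaultdict

# HYPOTHETICAL historical lending records: (applicant_group, was_approved).
# These numbers are invented for illustration and are not real lending data.
HISTORY = (
    [("group_a", True)] * 80 + [("group_a", False)] * 20
    + [("group_b", True)] * 70 + [("group_b", False)] * 30
)


def learn_approval_rates(records):
    """'Train' a deliberately naive model by memorizing each group's historical approval rate."""
    approved = defaultdict(int)
    total = defaultdict(int)
    for group, was_approved in records:
        total[group] += 1
        approved[group] += int(was_approved)
    return {group: approved[group] / total[group] for group in total}


def predict(rates, group, threshold=0.75):
    """Approve a new applicant only if their group's learned approval rate clears the (hypothetical) threshold."""
    return rates[group] >= threshold


def relative_denial_increase(rates, group, reference):
    """How much more likely `group` is to be denied than `reference` (0.5 means 50% more likely)."""
    denial = 1 - rates[group]
    reference_denial = 1 - rates[reference]
    return denial / reference_denial - 1


rates = learn_approval_rates(HISTORY)
print(rates)  # {'group_a': 0.8, 'group_b': 0.7}
print(predict(rates, "group_a"), predict(rates, "group_b"))  # True False
print(round(relative_denial_increase(rates, "group_b", "group_a"), 2))  # 0.5
```

Even though 70% of `group_b` applicants were approved historically, this naive model denies every new `group_b` applicant, which is how a model can scale, not just mirror, an existing bias. Real systems rarely use group labels this directly; they more often pick up the same pattern through correlated proxies, which makes the bias harder to spot.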
AI Bias Examples

Mortgage approval rates are a clear example of prejudice in AI. Algorithms have been found to be 40-80% more likely to deny borrowers of color because historical lending data disproportionately shows minorities being denied loans and other financial opportunities. That historical data teaches the AI to repeat the bias with each new application it receives.

There's also potential for sample size bias in medical fields. Say a doctor uses AI to analyze patient data, uncover patterns, and outline care recommendations. If that doctor primarily sees White patients, the recommendations aren't based on a representative population sample and might not meet everyone's unique medical needs.

Some businesses have algorithms that have resulted in biased real-life decisions or have made the potential for such bias more visible.

1. Amazon's Recruitment Algorithm

Amazon built a recruitment algorithm trained on ten years of employment history data. The data reflected a male-dominated workforce, so the algorithm learned to be biased against women: it penalized resumes from women and any resume using the word "women" or "women's."

2. Twitter Image Cropping

A viral tweet in 2020 showed that Twitter's algorithm favored White faces over Black ones when cropping pictures. A White user repeatedly shared images that included both his face and the face of a Black colleague (along with other Black faces), and the image previews were consistently cropped to show his face.

Twitter acknowledged the algorithm's potential for harm and said, "While our analyses to date haven't shown racial or gender bias, we recognize that the way we automatically crop photos means there is a potential for harm. We should've done a better job of anticipating this possibility when we were first designing and building this product."

3. Robot's Racist Facial Recognition

Scientists recently conducted a study in which robots scanned people's faces and sorted them into different boxes based on their characteristics, with categories including doctors, criminals, and homemakers.
The robot was biased in its process: it most often identified women as homemakers, Black men as criminals, and Latino men as janitors, and women of all ethnicities were less likely to be picked as doctors.

4. Intel and Classroom Technology's Monitoring Software

Intel and Classroom Technology's Class software has a feature that monitors students' faces to detect emotions while learning. Many have pointed out that because cultural norms for expressing emotion differ, there's a high probability of students' emotions being mislabeled. If teachers use these labels to talk with students about their level of effort and understanding, students can be penalized for emotions they're not actually displaying.

What can be done to fix AI bias?

AI ethics is a hot topic, which is understandable given how many ways AI's bias has been demonstrated in real life. Beyond being biased, AI can spread damaging misinformation, like deepfakes, and generative AI tools can even produce factually incorrect information. So what can be done to get a better grasp on AI and reduce the potential for bias?

It's very possible to use AI responsibly.

AI and interest in AI are only growing, so the best way to stay on top of the potential for harm is to stay informed about how AI can perpetuate harmful biases and to take action to ensure your use of AI doesn't add fuel to the fire.

Konoly