Meta, TikTok and YouTube may finally have to start sharing data with researchers

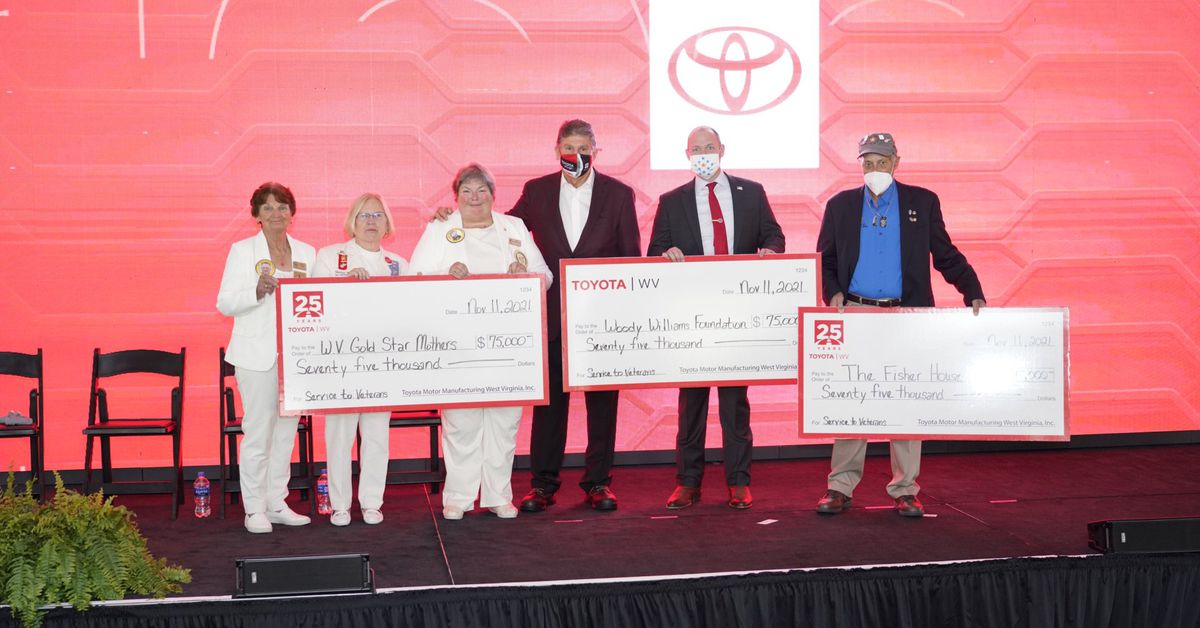

Sen. Chris Coons, who led Wednesday’s hearing on tech transparency, during a previous hearing in March. | Photo by Drew Angerer/Getty Images

On Wednesday, Congress was treated to the unfamiliar spectacle of highly intelligent people, talking with nuance, about platform regulation. The occasion was a hearing, titled “Platform Transparency: Understanding the Impact of Social Media,” and it served as a chance for members of the Senate Judiciary Committee to consider the necessity of legislation that would require big tech platforms to make themselves available for study by qualified researchers and members of the public.

One such piece of legislation, the Platform Accountability and Transparency Act, was introduced in December by an (ever-so-slightly) bipartisan group of senators. One of those senators, Chris Coons of Delaware, led the Wednesday hearing; another, Sen. Amy Klobuchar of Minnesota, was present as well. Over a delightfully brisk hour and forty minutes, Coons and his assembled experts explored the necessity of requiring platforms to disclose data and the challenges of requiring them to do so in a constitutional way.

To the first point — why is this necessary? — the Senate called Brandon Silverman, co-founder of the transparency tool CrowdTangle. (I interviewed him here in March.) CrowdTangle is a tool that allows researchers, journalists and others to view the popularity of links and posts on Facebook in real time, and understand how they are spreading. Researchers studying the effects of social networks on democracy say we would benefit enormously from having similar insight into the spread of content on YouTube, TikTok, and other huge platforms.

Silverman was eloquent in describing how Facebook’s experience of acquiring CrowdTangle, only to find that it could be used to embarrass the company, made other platforms less likely to undertake similar voluntary measures to improve public understanding.

“Above all else, the single biggest challenge is that in the industry right now, you can simply get away without doing any transparency at all,” said Silverman, who left the company now known as Meta in October. “YouTube, TikTok, Telegram, and Snapchat represent some of the largest and most influential platforms in the United States, and they provide almost no functional transparency into their systems. And as a result, they avoid nearly all of the scrutiny and criticism that comes with it.”

He continued: “That reality has industry-wide implications, and it frequently led to conversations inside Facebook about whether or not it was better to simply do nothing, since you could easily get away with it.”

When we do hear about what happens inside a tech company, it’s often because a Frances Haugen-type employee decides to leak it. The overall effect of that is to paint a highly selective, irregular picture of what’s happening inside the biggest platforms, said Nate Persily, a professor at Stanford Law School who also testified today.

“We shouldn’t have to wait for whistleblowers to whistle,” Persily said. “This type of transparency legislation is about empowering outsiders to get a better idea of what’s happening inside these firms.”

So what would the legislation now under consideration actually do? The Stanford Policy Center had a nice recap of its core features:

* Allows researchers to submit proposals to the National Science Foundation. If the NSF supports a proposal, social-media platforms would be required to furnish the needed data, subject to privacy protections that could include anonymizing it or “white rooms” in which researchers could review sensitive material.

* Gives the Federal Trade Commission the authority to require regular disclosure of specific information by platforms, such as data about ad targeting.

* The commission could require platforms to create basic research tools to study what content succeeds, similar to the basic design of the Meta-owned CrowdTangle.

* Bars social-media platforms from blocking independent research initiatives; both researchers and platforms would be given a legal safe harbor related to privacy concerns.

To date, much of the focus on regulating tech platforms has found members of Congress attempting to regulate speech, at both the individual and corporate level. Persily argued that starting instead with this kind of forced sunlight might be more effective.

“Once platforms know they’re being watched, it will change their behavior,” he said. “They will not be able to do certain things in secret that they’ve been able to up till now.” He added that platforms would likely change their products in response to heightened scrutiny as well.

OK, fine, but what are the tradeoffs? Daphne Keller, director of the Program on Platform Regulation at Stanford, testified that Congress should consider carefully what sorts of data it requires platforms to disclose. Among other things, any new requirements could be exploited by law enforcement to get around existing limits.

“Nothing about these transparency laws should change Americans’ protections under the Fourth Amendment or laws like the Stored Communications Act, and I don’t think that’s anyone’s intention here,” she said. “But clear drafting is essential to ensure that government can’t effectively bypass Fourth Amendment limits by harnessing the unprecedented surveillance power of private platforms.”

There are also First Amendment concerns around these sorts of platform regulations, she noted, pointing to the failure in court of two recent state laws designed to force platforms to carry speech that violates their policies.

“I want transparency mandates to be constitutional, but there are serious challenges,” Keller said. “And I hope that you will put really good lawyers on that.”

Unfortunately, into every Senate hearing, a little Ted Cruz must fall. The Texas senator was the only participant on Wednesday to exhaust his allotted speaking time without asking a single question of the experts present. Cruz expressed great confusion about why he got relatively few new Twitter followers in the days before Elon Musk said he was going to buy the company, but then got many more after the acquisition was announced.

“It is obvious someone flipped the switch,” the Texas Republican said. “The governors they had on that said ‘silence conservatives’ were flipped off. That is the only rational explanation.” (I know the word “governors” is used somewhat unconventionally here, but I listened to the tape five times and that’s what I heard.)

The actual explanation is that Musk has lots of conservative fans, they flocked back to the platform when they heard he was buying it, and from there Twitter’s recommendation algorithms kicked into gear.

But here even I must sympathize with Cruz, for all the reasons that today’s hearing was called in the first place. Absent legislation that requires platforms to explain how they work in greater detail, some people are always going to believe in the dumbest explanations possible. (Especially when those explanations serve a political purpose.) Cruz is what you get in a world with only voluntary transparency on the part of the platforms.

That said, we should still keep our expectations in check — there are limits on what platform disclosures can do for our discourse. It seems quite possible that you could explain exactly how Twitter works to Ted Cruz, and he would either fail to comprehend or willfully misunderstand you for political reasons. And even people who seek to understand recommender systems in good faith may fail to understand explanations on a technical level. “Transparency” isn’t a cure-all.

But… it’s a start? And seems much less fraught than lots of other proposed tech regulations, many of which find Congress attempting to regulate speech in ways that seem unlikely to survive First Amendment scrutiny.

Of course, where other countries hold hearings as a prelude to passing legislation, in the United States we typically hold hearings instead of passing legislation. And despite some Republican support for the measure — even Cruz said this one sounded fine to him — there’s no evidence that it’s gathering any particular momentum.

As usual, though, Europe is much further ahead of us. The Digital Services Act, which regulators reached an agreement on in April, includes provisions that would require big platforms to share data with qualified researchers. The law is expected to go into effect by next year. And so even if Congress dithers after today, transparency is coming to platforms one way or another. Here’s hoping it can begin to answer some very important questions.

JaneWalter
