Google search head says 'we won't always find' mistakes with AI products and that the company has to take risks rolling them out

Liz Reid, Google's vice president of search, told employees at a recent all-hands meeting that the company has to take risks in rolling out AI search tools.

Liz Reid, vice president of search at Google, speaks during an event in New Delhi on December 19, 2022.

Sajjad Hussain | AFP | Getty Images

Google's new head of search said at an all-hands meeting last week that mistakes will occur as artificial intelligence becomes more integrated in internet search, but that the company should keep pushing out products and let employees and users help find the issues.

"It is important that we don't hold back features just because there might be occasional problems, but more as we find the problems, we address them," Liz Reid, who was promoted to the role of vice president of search in March, said at the companywide meeting, according to audio obtained by CNBC.

"I don't think we should take away from this that we shouldn't take risks," Reid said. "We should take them thoughtfully. We should act with urgency. When we find new problems, we should do the extensive testing but we won't always find everything and that just means that we respond."

Reid's comments come at a critical moment for Google, which is scrambling to keep pace with OpenAI and Microsoft in generative AI. The market for chatbots and related AI tools has exploded since OpenAI introduced ChatGPT in late 2022, giving consumers a new way to seek information online outside of traditional search.

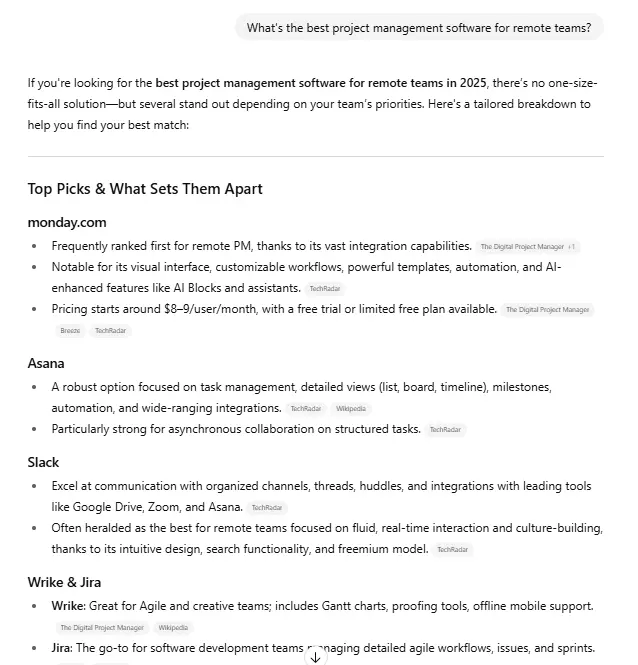

Google's rush to push out new products and features has led to a series of embarrassments. Last month, the company released AI Overview, which CEO Sundar Pichai called the biggest change in search in 25 years, to a limited audience, allowing users to see a summary of answers to queries at the very top of Google search. The company plans to roll the feature out worldwide.

Though Google had been working on AI Overview for more than a year, users quickly noticed that queries were returning nonsensical or inaccurate answers, and they had no way to opt out. Widely circulated results included the false statement that Barack Obama was America's first Muslim president, a suggestion for users to try putting glue in pizza and a recommendation to try eating at least one rock per day.

Google scrambled to fix mistakes. Reid, a 21-year company veteran, published a blog post on May 30, deriding the "troll-y" content some users posted, but admitting that the company made more than a dozen technical improvements, including limiting user-generated content and health advice.

"You may have seen stories about putting glue on pizza, eating rocks," Reid told employees at the all-hands meeting. Reid was introduced on stage by Prabhakar Raghavan, who runs Google's knowledge and information organization.

A Google spokesperson said in an emailed statement that the "vast majority" of results are accurate and that the company found a policy violation on "less than one in every 7 million unique queries on which AI Overviews appeared."

"As we've said, we're continuing to refine when and how we show AI Overviews so they're as useful as possible, including a number of technical updates to improve response quality," the spokesperson said.

The AI Overview miscues fell into a pattern.

Shortly before Google launched its AI chatbot Bard, now called Gemini, last year, company executives were confronted about the challenges posed by ChatGPT, which had gone viral. Jeff Dean, Google's chief scientist and longtime head of AI, said in December 2022 that the company had much more "reputational risk" and needed to move "more conservatively than a small startup" since the chatbots still had many accuracy issues.

But Google went ahead with its chatbot, and was criticized by shareholders and employees for a "botched" launch that, some said, was hastily organized to match the timeline of a Microsoft announcement.

A year later, Google rolled out its AI-powered Gemini image generation tool, but had to pause the product after users discovered historical inaccuracies and questionable responses that circulated widely on social media. Pichai sent a companywide email at the time, saying the mistakes were "unacceptable" and "showed bias."

Red teaming

Reid's posture suggests Google has grown more willing to accept mistakes.

"At the scale of the web, with billions of queries coming in every day, there are bound to be some oddities and errors," she wrote in her recent blog post.

Reid said that some AI Overview queries from users were intentionally adversarial and that many of the worst ones listed were fake.

"People actually created templates on how to get social engagement by making fake AI Overviews so that's an additional thing we're thinking about," Reid said.

She said the company does "a lot of testing ahead of time" as well as "red teaming," which involves efforts to find vulnerabilities in technology before they can be discovered by outsiders.

"No matter how much red teaming we do, we will need to do more," Reid said.

By going live with AI products, Reid said, teams were able to find issues like "data voids," which is when the web doesn't have sufficient data to correctly answer a particular query. They were also able to identify comments from a particular webpage, detect satire and correct spelling.

"We don't just have to understand the quality of the site or the page, we have to understand each passage of a page," Reid said, regarding the challenges the company faces.

Reid thanked employees from various teams that worked on the corrections and emphasized the importance of employee feedback, directing staffers to an internal link to report bugs.

"Anytime you see problems, they can be small, they can be big," she said. "Please file them."
