Meta Launches New Llama AI Model, Building Towards the Next Stage

Meta continues to evolve its AI offerings.

Meta has announced its final big AI update for the year, with CEO Mark Zuckerberg launching a new 70 billion parameter Llama 3.3 model, which, he says, performs almost as well as Meta's 405 billion parameter model while running far more efficiently.

The new model will expand the use cases for Meta’s Llama system, and will enable more developers to build on Meta’s open source AI models, which have already seen significant take-up.

Zuckerberg says that Llama is now the most adopted AI model in the world, with more than 650 million downloads. Meta is committed to open sourcing its AI tools to facilitate innovation, though that’ll also ensure that Meta becomes the backbone of many more AI projects, which could give it more market power in the long run.

The same applies to VR, where Meta is also seeking to solidify its leadership. By working with third parties, Meta can expand its offerings on both fronts, while also establishing its tools as the market standard for the next stage of digital connectivity.

Zuckerberg also outlined Meta’s plans for a new AI data center in Louisiana, as the company explores a new undersea cabling project, and noted that Meta AI remains on track to become the most used AI assistant in the world, with 600 million monthly active users.

Though that could be a little misleading. Meta has more than 3 billion users across its family of apps (Facebook, Instagram, Messenger and WhatsApp), and it’s shoved its AI assistant into all of them, while also surfacing random prompts that encourage users to generate AI images in each app.

As such, it’s no surprise that Meta AI has over 600 million users. More telling would be data on how long each person spends chatting with its AI bot, and how often they come back to it.

Because I don’t really see a solid use case for AI assistants in social apps. Like, sure, you can generate an image, but it’s not real, and not an expression of an actual thing that you’ve experienced. You can ask Meta AI questions, but I doubt that has huge appeal for most regular users.

Regardless, Meta’s committed to making its AI tools a thing, though I suspect, again, that their true value for the company will come in the next stage, when VR becomes a bigger consideration for more users.

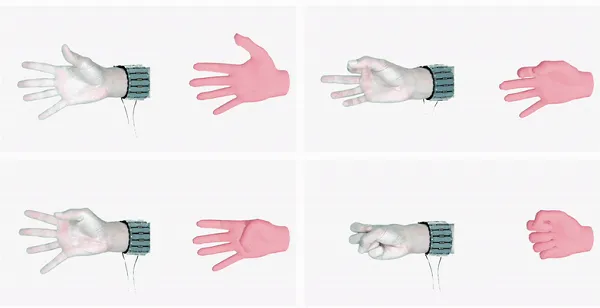

On that front, Meta’s also advanced to the next stage of testing for its wrist-based surface electromyography (sEMG) device, which measures muscle activity from electrical signals in the wrist to enable more intuitive control.

That could be a significant advance for Meta’s wearables push, for both AR and VR applications. And when you view all of Meta’s various projects as building towards this next major shift, they all start to make a lot more sense.

Essentially, I don’t think that any of Meta’s projects are an end in themselves at this stage; rather, they’re all guiding users towards the next level, where Meta increasingly looks set to dominate.

MikeTyes