The Tech SEO Audit for the AI Search Era: How to Maximize Your AI Visibility via @sejournal, @JetOctopus

Unlock the secrets of AI visibility to adapt your website for future search trends and improve technical SEO practices.

This post was sponsored by JetOctopus. The opinions expressed in this article are the sponsor’s own.

How do I optimize my site for ChatGPT and Perplexity, not just Google?

How do I know if AI bots are actually crawling my site?

How should my technical SEO strategy change for AI Search?

A significant portion of your site’s search impressions in 2026 is generated by machines researching on behalf of humans.

Those machines don’t care about your keyword rankings. They care whether your:

- HTML loads cleanly in under 200 milliseconds
- Product detail page is reachable in fewer than four clicks
- Content answers a specific, nine-word question that has never appeared in any keyword research tool in your career

This isn’t speculation. It’s what our server log data across hundreds of enterprise websites has shown us, consistently, since mid-2025.

In This Guide:

1. What’s Actually Happening On Your Site
2. How To Make Sure ChatGPT, Perplexity & LLMs Can Reach Your Content
3. The Technical Audit: Where to Start
4. The New KPI: Technical Accessibility

What’s Actually Happening On Your Site

My colleague, Stan, flagged a pattern in a Slack message: query lengths were growing at rates that didn’t correlate with human behavior.

A 161% growth rate in 10-word queries year-over-year is not driven by users who suddenly got more verbose. It’s driven by AI agents decomposing a single user prompt into dozens of parallel sub-queries, a process researchers now call “fan-out.”

Query Length Growth in 2025

Image created by JetOctopus, Aggregated GSC data across hundreds of enterprise properties, 2025


The gradient is the tell. Human search behavior doesn’t scale this cleanly by word count. Machines do. By October 2025, 7-plus-word queries reached nearly 1% of total query volume, roughly triple their historical share.

More revealing than the volume is the CTR. While impression counts for 10-word queries spiked 161%, click-through rate collapsed to 2.26%, down from 8–11% in 2023.

The AI reads your page, extracts the answer, synthesizes it for the user. Your site never gets the visit.

We call these “phantom impressions.” They’re real signals that your content is being evaluated inside AI reasoning chains. If you’re filtering them out of your reporting because they don’t drive traffic, you are flying blind.

The Three Bots Visiting Your Site & Their Impact On SERP Visibility

Not all AI crawlers are equal, and treating them as a single category is the first mistake most technical SEOs make.

Training bots crawl broadly and ignore click depth. A training visit means the AI knows your content exists, not that users will ever see it.

AI search bots drop off quickly beyond two or three clicks from the homepage and typically visit each page only once a month.

AI user bots are initiated when a real person asks a question in ChatGPT, Perplexity, or Claude, and the AI researches the answer on their behalf. These are the only visits that translate to actual AI visibility.

| Bot Type | What Triggers It | Crawl Depth | Impact on AI Visibility |
| --- | --- | --- | --- |
| Training bots | Model education cycles | Deep: ignores click distance | None directly. Awareness only. |
| AI search bots | New URL discovery & fresh content | Shallow: ~1 visit/month beyond 2–3 clicks | Critical gatekeeper. If it misses a page, user bots won’t find it either. |
| AI user bots | Real user query in ChatGPT / Claude / Perplexity | Selective: driven by speed and structure | High. Closest proxy to an AI impression. |

Your site can receive heavy crawling from training and search bots and still be completely absent from AI-generated answers. If you’re not segmenting AI bot traffic by type in your log analysis, you have no idea which third of the iceberg you’re measuring.

Which SEO Signals Do LLMs Respect?

Robots.txt is your primary lever.

Most major AI platforms (ChatGPT, Claude, Gemini) follow robots.txt directives. Perplexity is a partial exception: PerplexityBot respects robots.txt, but Perplexity-User, the user-triggered bot, does not. Cloudflare confirmed this in an investigation. Most sites haven’t audited their robots.txt with AI access in mind. Do it.

Sitemaps are broadly supported.

ChatGPT, Claude, and PerplexityBot all use XML sitemaps for URL discovery. Keep them accurate.

Signals Best Saved For SEO & Ranking Efforts

The signals below don’t appear to affect AI visibility, but they still matter for ranking on queries that trigger traditional SERPs.

Canonical tags and noindex directives do nothing for AI bots.

AI crawlers don’t build a search index, so they have no use for these meta-signals. Content hidden from Google using noindex is fully visible to ChatGPT’s crawler.

llms.txt does nothing.

Our log data shows major AI bots don’t read this file. Don’t invest time here.

JavaScript rendering is a critical blind spot.

Most AI crawlers (ChatGPT, Claude, Perplexity) don’t render JavaScript. If your product pages load key content client-side, those agents read an empty shell. Server-side rendering is the only architecture that works universally. The exception is Google Gemini, which uses the same Web Rendering Service as Googlebot.
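One way to approximate what a non-rendering crawler sees is to check the raw HTML response for the facts you expect on the page, ignoring anything that only exists inside script or style blocks. The sketch below is illustrative, not how any specific AI bot parses pages: the function name `missing_from_raw_html` and the sample phrases are hypothetical, and it assumes you have already fetched the HTML as a string.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text that appears outside <script> and <style> elements."""

    SKIPPED_TAGS = {"script", "style"}

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIPPED_TAGS:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIPPED_TAGS and self._skip_depth > 0:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Only keep text that a non-rendering client could read directly.
        if self._skip_depth == 0:
            self.chunks.append(data)


def missing_from_raw_html(raw_html, required_phrases):
    """Return the phrases that do not appear in the un-rendered HTML text."""
    parser = VisibleTextParser()
    parser.feed(raw_html)
    parser.close()
    # Collapse whitespace so line breaks in the markup do not hide a match.
    text = " ".join(" ".join(parser.chunks).split()).lower()
    return [phrase for phrase in required_phrases if phrase.lower() not in text]
```

A page that returns an empty list serves those facts in its initial HTML. Any phrase that comes back is a candidate for server-side rendering.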

How To Make Sure ChatGPT, Perplexity & LLMs Can Reach Your Content

AI search bots visit deep pages roughly once a month and drop off sharply beyond three clicks from the homepage. The pages with the most specific, answerable information are often the hardest for agents to reach.

The fix: Elevate your most valuable deep pages through internal linking, ensuring they’re reachable within three clicks of the homepage.

Pages crawled by training bots but never reached by user bots are your highest-priority targets. Pages AI user bots visit frequently are telling you what to scale: more content covering the same topic cluster and depth.

Optimize Content For Longer, Fan-Out Queries

95% of the queries driving AI citations have zero monthly search volume. They’re synthetic sub-queries generated by AI models. But they show up in GSC: impressions, no clicks, query lengths you’d never target voluntarily.

How To Find Fan-Out Query Opportunities

To surface fan-out queries that are worth chasing, connect your GSC API to JetOctopus (to bypass the 1,000-row UI limit) and filter for: query length greater than 7 words, impressions under 50, clicks at 0, over the last 3 months. That’s your Fan-Out Opportunity Matrix, the exact questions AI agents are asking about your content.
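If you are working from a raw GSC export instead, the same filter can be sketched in a few lines of Python. This assumes each row is a dict with `query`, `impressions`, and `clicks` keys covering the last 3 months; the function name `fan_out_candidates` and the row shape are hypothetical, and the thresholds simply mirror the ones above.

```python
def fan_out_candidates(rows, min_words=8, max_impressions=50):
    """Filter query rows down to likely fan-out sub-queries.

    rows: iterable of dicts with 'query', 'impressions', and 'clicks' keys
    (an assumed export shape, not a fixed GSC schema).
    """
    matches = []
    for row in rows:
        word_count = len(row["query"].split())
        if (
            word_count >= min_words                    # longer than 7 words
            and row["impressions"] < max_impressions   # under 50 impressions
            and row["clicks"] == 0                     # zero clicks
        ):
            matches.append(row)
    # Highest-impression queries first, so the strongest signals lead.
    return sorted(matches, key=lambda row: row["impressions"], reverse=True)
```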

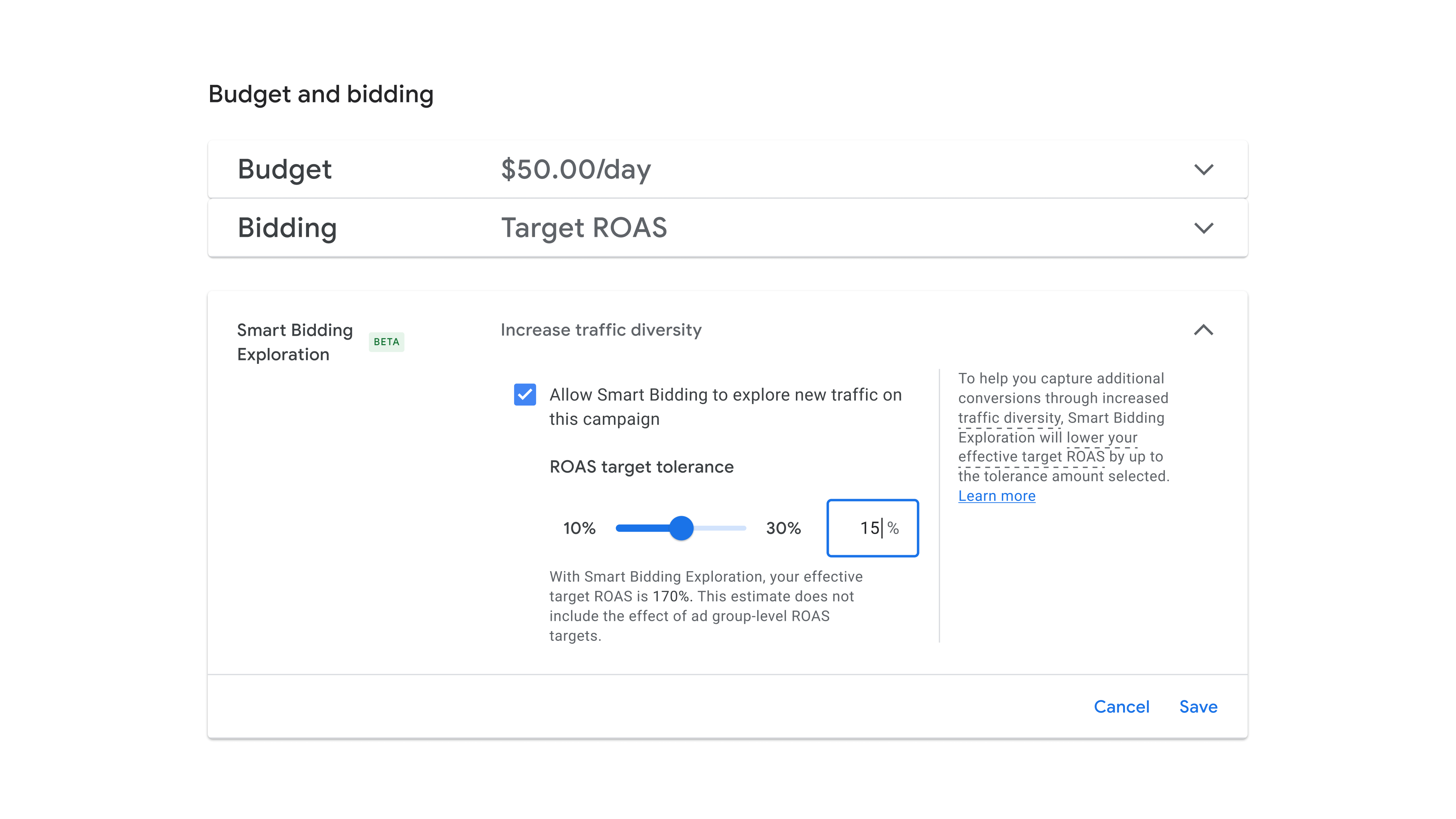

Prompt Types That Fan Out Most

Image created by JetOctopus, 2025


If your content isn’t structured to answer list and comparison queries, with explicit rankings, pros/cons, and side-by-side specs, you’re leaving the highest fan-out surface area unoptimized.

“Product review” intent queries surged from 239 in June 2025 to over 40,000 by September 2025. That 16,000% increase was AI agents systematically harvesting structured opinion data. If your product pages lack this depth, you’re invisible to that harvest.

The Technical Audit: Where to Start

Step 1: Identify AI User Bot Traffic In Logs

Pull raw server logs (Apache/Nginx) and export all lines containing these user agents: OAI-SearchBot and ChatGPT-User, PerplexityBot and Perplexity-User, Claude-SearchBot and Claude-User. Then manually group hits by user-agent patterns and endpoints in a spreadsheet. To distinguish training bots from user bots, you’ll need to maintain your own classification list — one that changes often and isn’t standardized.

In JetOctopus Log Analyzer, this segmentation is built in: filter by bot type (training, search, and user) in a few clicks and immediately see which pages AI user bots visit (your AI-visible content, ready to scale) versus pages training bots hit but user bots never reach (your highest-priority fix targets).
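For the manual route, a minimal sketch of the grouping might look like the following. It assumes Apache/Nginx combined log format and a hand-maintained classification table. The search and user tokens are the ones listed above; `GPTBot` and `ClaudeBot` are added here as examples of training crawlers and are not part of the list in this guide. Matching on the user-agent string alone does not verify that a request really came from the named bot.

```python
import re
from collections import Counter

# Hand-maintained mapping of user-agent tokens to bot type (an assumption;
# this list changes often and should be reviewed regularly).
BOT_TYPES = {
    "GPTBot": "training",
    "ClaudeBot": "training",
    "OAI-SearchBot": "search",
    "PerplexityBot": "search",
    "Claude-SearchBot": "search",
    "ChatGPT-User": "user",
    "Perplexity-User": "user",
    "Claude-User": "user",
}

# Request, status, size, referrer, and user agent in combined log format.
LOG_LINE = re.compile(
    r'"(?:GET|HEAD|POST) (?P<path>\S+)[^"]*" \d{3} \S+ "[^"]*" "(?P<ua>[^"]*)"'
)


def classify(user_agent):
    """Return 'training', 'search', 'user', or None for non-AI traffic."""
    ua_lower = user_agent.lower()
    for token, bot_type in BOT_TYPES.items():
        if token.lower() in ua_lower:
            return bot_type
    return None


def hits_by_type(lines):
    """Count hits per URL path, grouped by AI bot type."""
    hits = {"training": Counter(), "search": Counter(), "user": Counter()}
    for line in lines:
        match = LOG_LINE.search(line)
        if not match:
            continue
        bot_type = classify(match.group("ua"))
        if bot_type:
            hits[bot_type][match.group("path")] += 1
    return hits


def training_only_paths(hits):
    """Paths training bots reached but user bots never requested."""
    return sorted(set(hits["training"]) - set(hits["user"]))
```

The output of `training_only_paths` corresponds to the fix targets described above, and `hits["user"]` to the pages that are already AI-visible.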

Step 2: Audit Technical Accessibility Of Deep Pages

Pick a sample of deep URLs and, for each one, check the HTML payload size, view the raw HTML to confirm key content isn’t injected via JavaScript, count clicks from the homepage to simulate crawl depth, and test load time in Chrome DevTools or Lighthouse. Also check whether important content sits behind accordions or “View More” elements, which typically require JavaScript execution that AI bots skip entirely. AI agents don’t click. If information only appears after user interaction, it doesn’t exist for these crawlers. For large sites with thousands of deep pages, this sampling approach misses a lot.
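Counting clicks by hand does not scale, but if you can export an internal link graph from any crawler, click depth is a breadth-first search from the homepage. The sketch below assumes a hypothetical `links` dict that maps each URL path to the paths it links to; the default `max_clicks=3` reflects the "fewer than four clicks" guideline from the introduction and is a parameter, not a fixed rule.

```python
from collections import deque


def click_depths(links, start="/"):
    """Breadth-first search: minimum number of clicks from start to each page."""
    depths = {start: 0}
    queue = deque([start])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, max_clicks=3, start="/"):
    """Pages deeper than max_clicks, including pages unreachable from start."""
    depths = click_depths(links, start)
    all_pages = set(links) | {t for targets in links.values() for t in targets}
    return sorted(
        page for page in all_pages
        if depths.get(page, float("inf")) > max_clicks
    )
```

Pages returned by `too_deep` are candidates for additional internal links from shallower pages.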

Step 3: Clean Up Your Robots.txt

Open your robots.txt and review all Disallow and Allow directives for every user-agent line by line. AI bots follow Disallow rules, so make sure you’re not accidentally blocking important URLs. Manually test key URLs to confirm they aren’t blocked. A 30-minute audit here can prevent you from blocking crawlers you want in, or exposing content you’d rather keep out.
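The manual URL test can be scripted with Python's standard-library `urllib.robotparser`. This is a rough check under stated assumptions: the agent list is the one from Step 1, the helper name `blocked_urls` is hypothetical, and the standard-library parser applies the first matching rule and does not interpret `*` or `$` wildcards in paths, so its verdicts can differ from how a given crawler reads the same file.

```python
from urllib.robotparser import RobotFileParser

# User-agent tokens from Step 1; extend this list to match your own audit.
AI_AGENTS = [
    "OAI-SearchBot",
    "ChatGPT-User",
    "PerplexityBot",
    "Perplexity-User",
    "Claude-SearchBot",
    "Claude-User",
]


def blocked_urls(robots_txt, urls, agents=AI_AGENTS):
    """Map each agent to the URLs that the given robots.txt text disallows."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {
        agent: [url for url in urls if not parser.can_fetch(agent, url)]
        for agent in agents
    }
```

Run it against a short list of your most important URLs. An unexpected entry in any agent's list points to a Disallow rule worth reviewing.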

Step 4: Map Your Phantom Impressions

Export data from GSC Performance reports filtered by impressions with zero clicks. Because of the 1,000-row UI limit, you’ll need to use the GSC API or export in chunks by date and query, then merge datasets in spreadsheets or BigQuery. Also factor in query frequency: long queries appearing daily are likely not fan-outs.

Connect your GSC API to JetOctopus to bypass the row limit and build your Fan-Out Opportunity Matrix automatically — the exact questions AI agents are asking about your content, ready to act on.
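For the do-it-yourself path, the merge and the frequency check can be sketched as below. It assumes daily rows with `date`, `query`, `impressions`, and `clicks` keys pulled in overlapping chunks. The function name `phantom_queries` is hypothetical, and `max_active_days=5` is an arbitrary illustrative cutoff for "appears too often to be a fan-out", not a figure from our data.

```python
from collections import defaultdict


def phantom_queries(daily_rows, min_words=8, max_active_days=5):
    """Merge chunked daily exports and keep long, zero-click, infrequent queries.

    Returns (query, total_impressions, active_days) tuples, highest
    impressions first.
    """
    seen = set()
    totals = defaultdict(lambda: {"impressions": 0, "clicks": 0, "days": set()})
    for row in daily_rows:
        key = (row["date"], row["query"])
        if key in seen:
            # Overlapping export chunks can repeat a day; count it only once.
            continue
        seen.add(key)
        entry = totals[row["query"]]
        entry["impressions"] += row["impressions"]
        entry["clicks"] += row["clicks"]
        entry["days"].add(row["date"])

    results = []
    for query, entry in totals.items():
        if len(query.split()) < min_words:
            continue
        if entry["clicks"] > 0:
            continue
        if len(entry["days"]) > max_active_days:
            # Long queries that recur on many days are likely not fan-outs.
            continue
        results.append((query, entry["impressions"], len(entry["days"])))
    return sorted(results, key=lambda item: item[1], reverse=True)
```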

Step 5: Monitor The Changes

Set up a recurring export process: pull GSC data monthly and compare impressions over time, re-run log analysis scripts and diff bot activity, and track Core Web Vitals separately in PageSpeed Insights or CrUX. You’ll end up stitching together multiple data sources with no unified alerting, which makes it hard to catch regressions early.

JetOctopus Alerts covers exactly this: unified notifications for changes in AI bot activity alongside Googlebot behavior, Core Web Vitals, on-page SEO issues, and SERP efficiency drops, so you catch regressions before they compound.
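If you are diffing bot activity by hand, the comparison itself is simple once you have per-page hit counts for two periods. The sketch below assumes two dicts mapping URL path to AI user bot hits; the function name `activity_regressions` and the 50% `drop_threshold` are hypothetical choices for illustration.

```python
def activity_regressions(previous, current, drop_threshold=0.5):
    """Flag pages whose bot hits fell by at least drop_threshold between periods.

    previous, current: dicts mapping URL path to hit count for one bot type.
    Returns (path, hits_before, hits_after) tuples, largest absolute drop first.
    """
    flagged = []
    for path, before in previous.items():
        after = current.get(path, 0)
        if before > 0 and (before - after) / before >= drop_threshold:
            flagged.append((path, before, after))
    return sorted(flagged, key=lambda item: item[1] - item[2], reverse=True)
```

Pages that disappear from the current period entirely are treated as a 100% drop, which is usually the first thing worth investigating.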

The New KPI: Technical Accessibility

SEO in 2026 is restructuring around one constraint: can an AI agent crawl, reach, and extract a fact from your 50,000th product page in under 200 milliseconds?

If the answer is no, your rankings, backlinks, and content quality become irrelevant for a growing share of search interactions. The machines are searching. The question is how quickly you can see what’s actually happening.

Start with your logs. Everything else follows from there.

Want to see exactly how AI bots are interacting with your site: which pages they reach, which they skip, and where your fan-out opportunities are hiding? Book a live walkthrough of the JetOctopus platform. We’ll pull your actual log data and show you what your GSC reports aren’t telling you.

Image Credits

Featured Image: Image by JetOctopus. Used with permission.

Hollif
